AI for Wireless

Methods for lower-latency and lower-footprint wireless intelligence, including early exits, pruning, few-shot transfer, and compression.

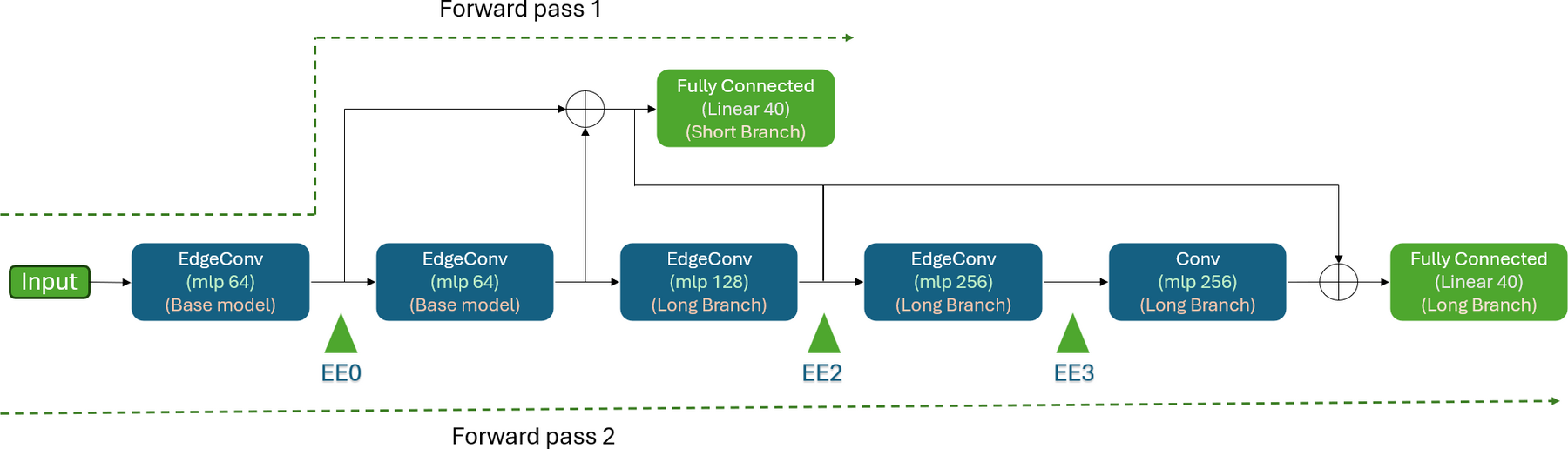

Key takeaway: Introduces early-exit policies that reduce point-cloud inference latency while preserving accuracy on difficult inputs.

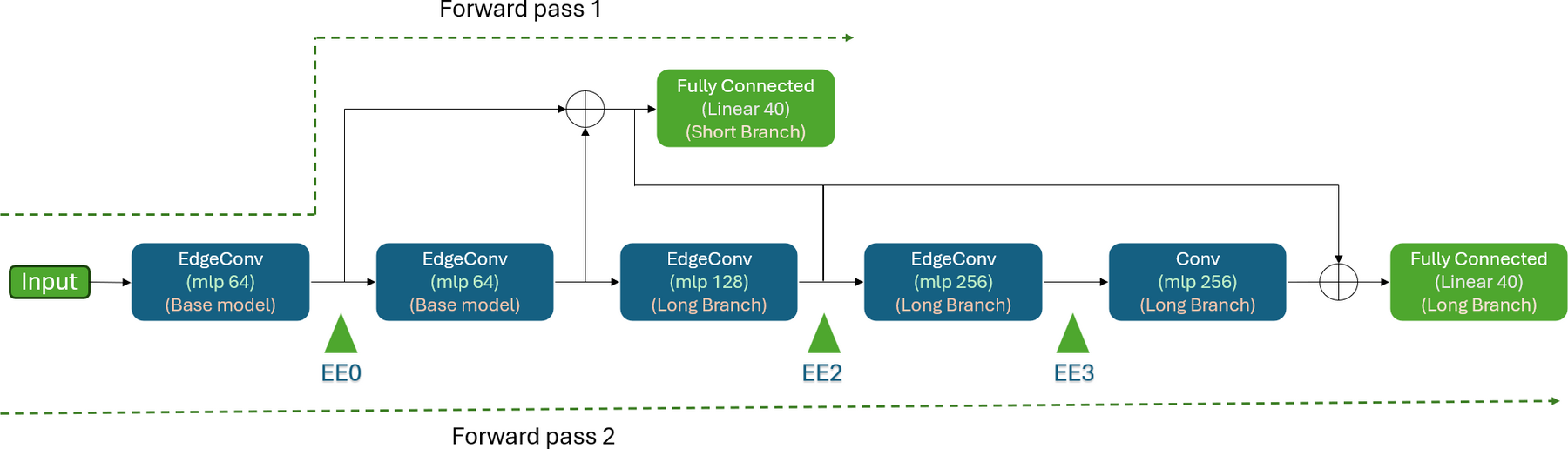

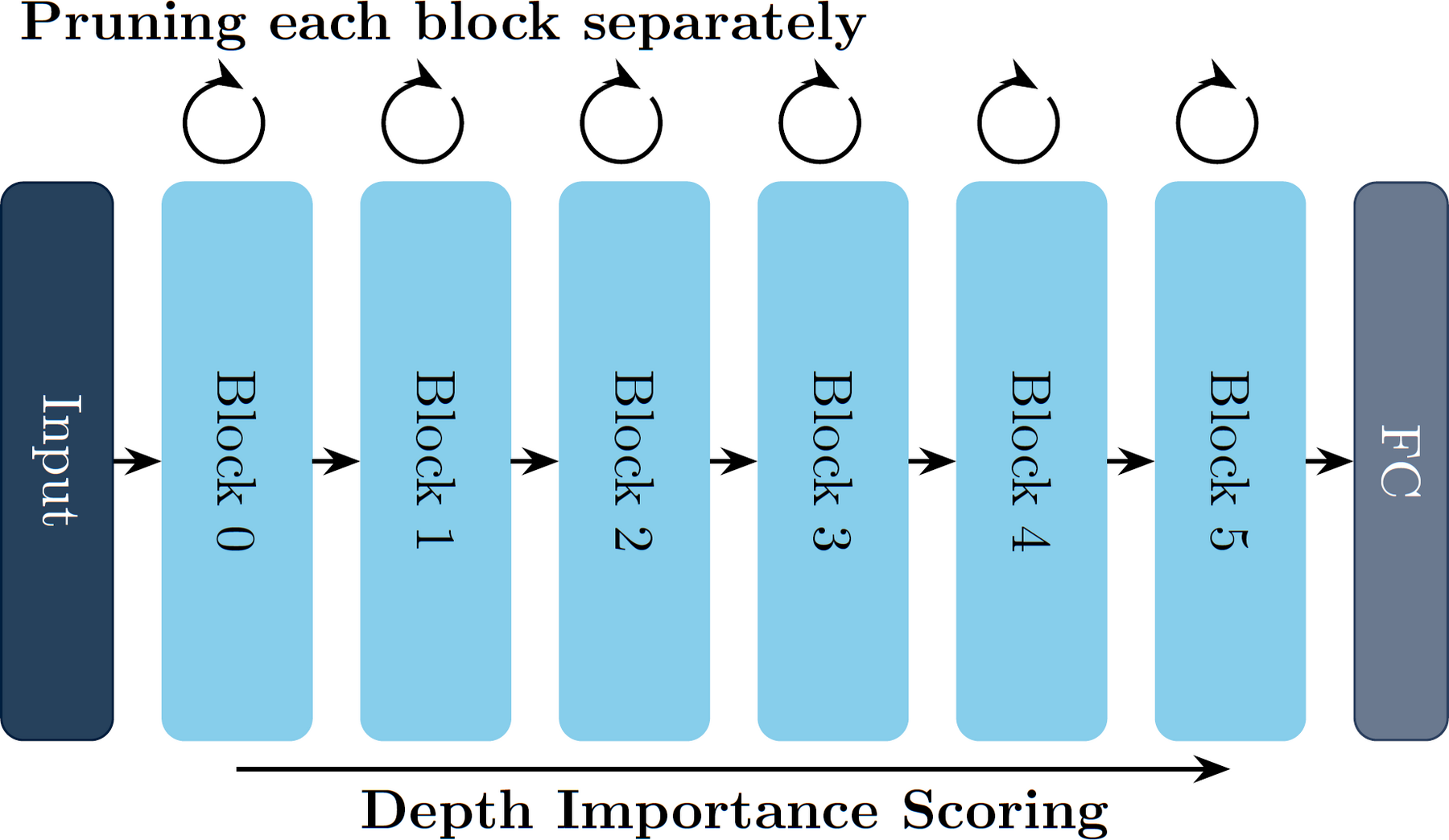

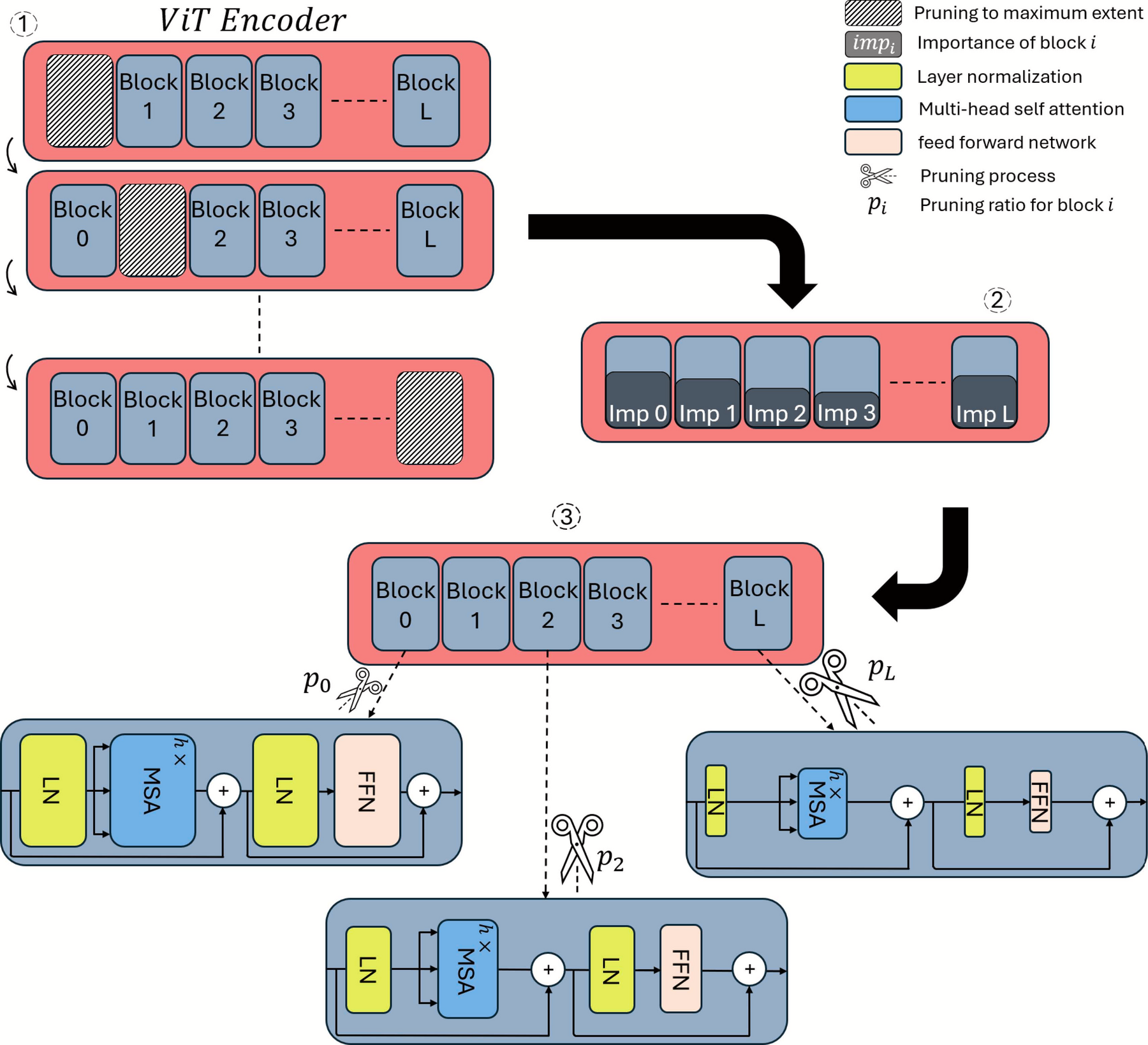

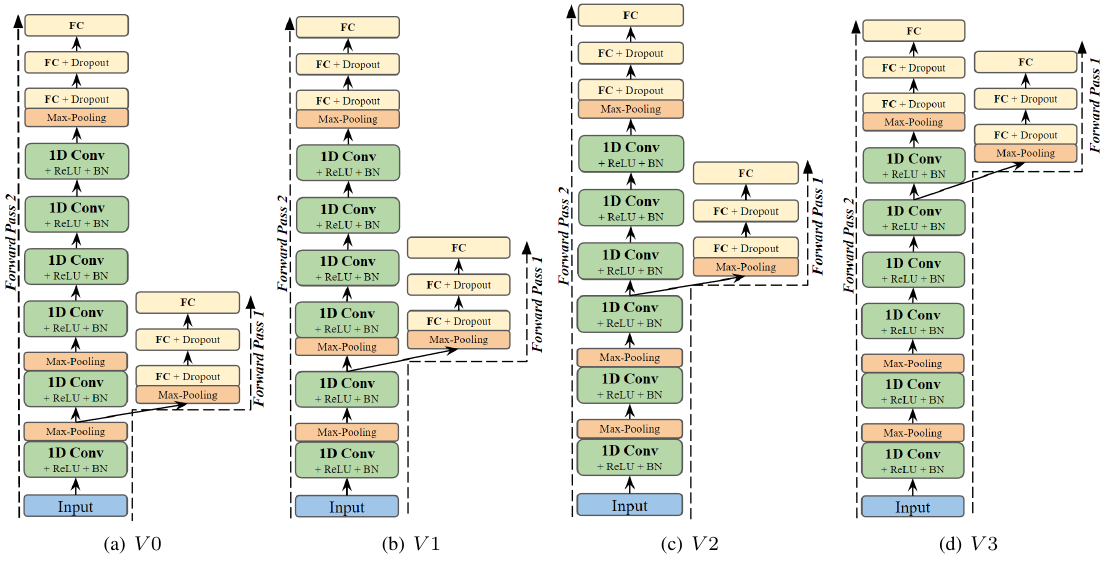

Key takeaway: Combines structured pruning with early exits to characterize practical accuracy-cost tradeoffs for vehicular automatic modulation classification (AMC).

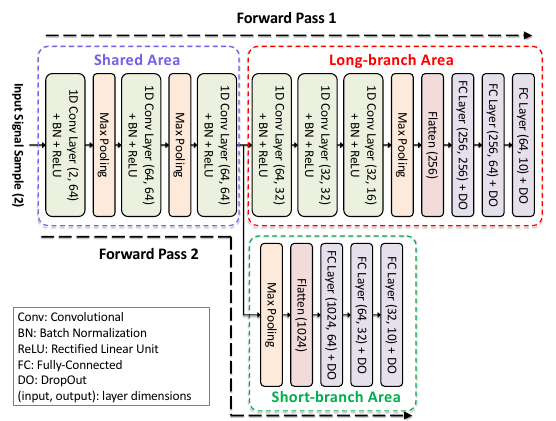

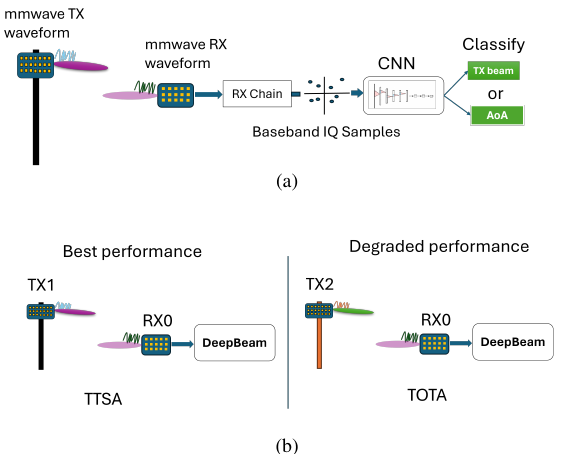

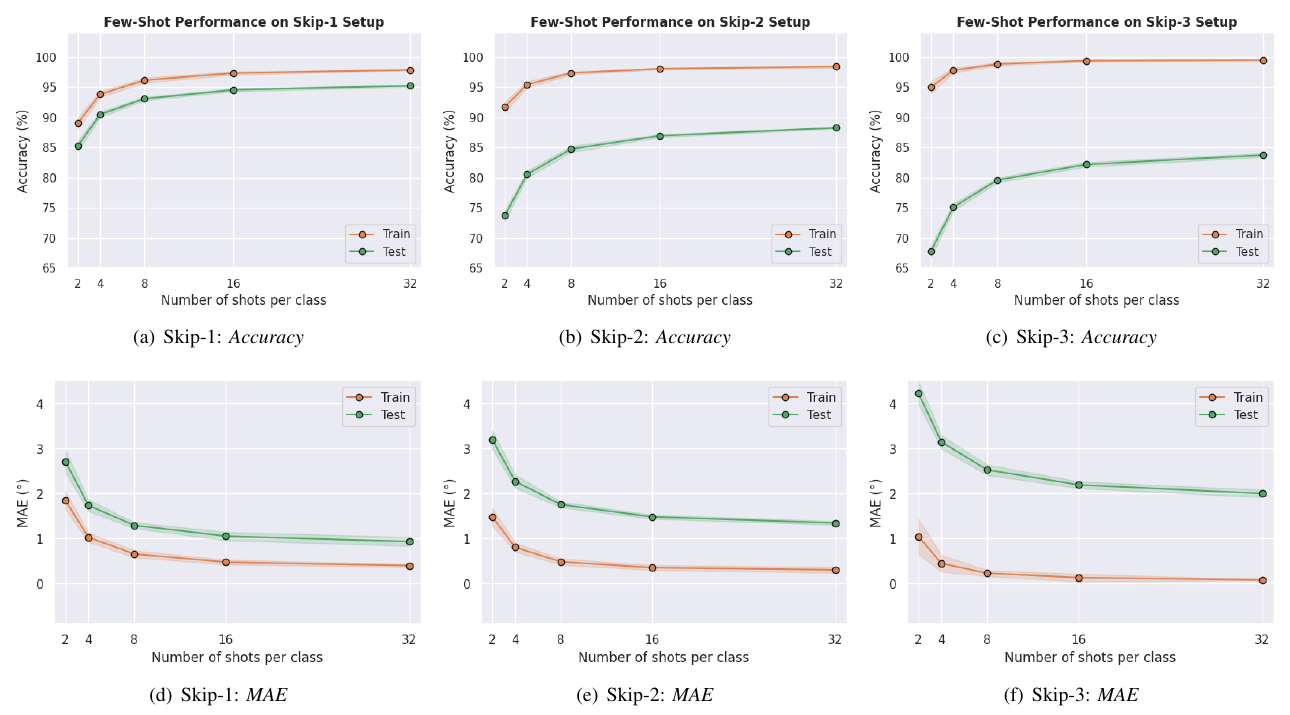

Key takeaway: Uses few-shot learning to generalize angle-of-arrival (AoA) estimation to new setups with little data and lightweight inference.

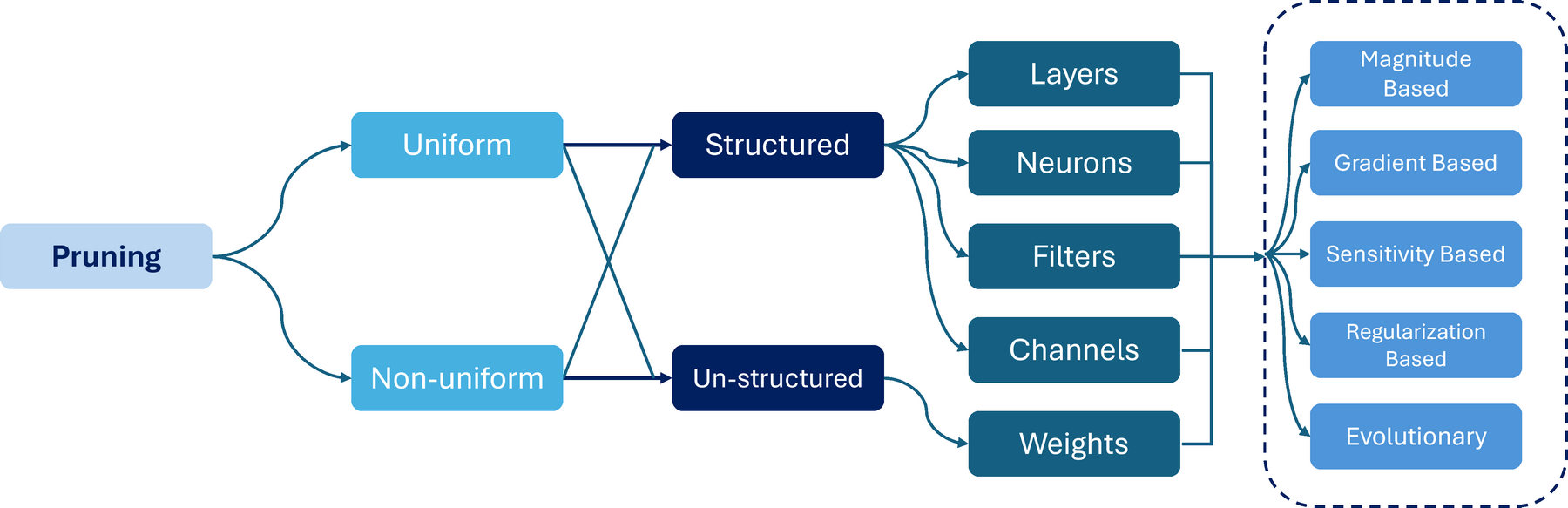

Key takeaway: Provides a comprehensive map of compression methods and open challenges for sustainable AI in xG wireless systems.

Key takeaway: Applies prototypical networks to beam prediction so models adapt to unseen antenna configurations with limited data.

Key takeaway: Introduces structured nonuniform pruning to shrink AoA models while keeping competitive estimation performance.

Key takeaway: Targets resource-constrained deployment with tiny federated WFMs for multitask wireless sensing and communication.

Key takeaway: Demonstrates that early exits can reduce average AMC inference cost while maintaining strong classification quality.
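To make the early-exit idea concrete, here is a minimal sketch of one common exit rule: stop at the first intermediate classifier whose top softmax probability clears a confidence threshold. It is an illustration under stated assumptions, not the method used in the works listed here. The names `early_exit_predict`, `stages`, and `threshold`, and the toy stages, are all hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def early_exit_predict(stages, x, threshold=0.9):
    """Run (backbone, head) stages in order and stop at the first confident exit.

    `stages` is a hypothetical list of (backbone_fn, head_fn) pairs: each
    backbone refines the features, each head maps them to class logits.
    Returns (predicted_class, exit_index). The last stage always answers.
    """
    if not stages:
        raise ValueError("at least one stage is required")
    features = x
    for exit_index, (backbone, head) in enumerate(stages):
        features = backbone(features)
        probs = softmax(head(features))
        # Assumed exit rule: max softmax probability as the confidence score.
        if max(probs) >= threshold:
            break
    return probs.index(max(probs)), exit_index


# Toy stand-ins for a real network: the deeper head is simply "sharper".
toy_stages = [
    (lambda f: f, lambda f: [1.0 * v for v in f]),
    (lambda f: f, lambda f: [10.0 * v for v in f]),
]

easy_result = early_exit_predict(toy_stages, [5.0, 0.0])  # confident at exit 0
hard_result = early_exit_predict(toy_stages, [0.0, 0.3])  # needs exit 1
```

In this toy setup the easy input leaves at the first exit and the ambiguous one continues to the second, which is how average inference cost drops while hard samples still receive the full depth.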

Key takeaway: Adds recoverability-aware routing so early-exit decisions prioritize samples that truly benefit from deeper inference.

Key takeaway: Uses prototypical networks for few-shot AoA estimation with strong adaptation to unseen classes and sparse labels.
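As a rough illustration of how prototypical networks classify with few labels, the sketch below averages the support embeddings of each class into a prototype and assigns a query to the nearest prototype by Euclidean distance. It assumes embeddings have already been produced by some encoder, which is not shown. The function names, the `beam_a`/`beam_b` labels, and the toy vectors are hypothetical and not taken from the listed works.

```python
import math


def class_prototypes(support):
    """Average the support embeddings of each class.

    `support` is a list of (embedding, label) pairs, where an embedding is a
    list of floats assumed to come from a trained encoder.
    """
    sums, counts = {}, {}
    for embedding, label in support:
        if label not in sums:
            sums[label] = [0.0] * len(embedding)
            counts[label] = 0
        sums[label] = [s + e for s, e in zip(sums[label], embedding)]
        counts[label] += 1
    return {label: [s / counts[label] for s in vec] for label, vec in sums.items()}


def classify(query, prototypes):
    """Return the label whose prototype is closest to the query embedding."""
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))


# Two labelled examples per class: a "2-shot" toy episode.
support_set = [
    ([0.0, 0.0], "beam_a"),
    ([0.2, 0.0], "beam_a"),
    ([1.0, 1.0], "beam_b"),
    ([1.0, 0.8], "beam_b"),
]
protos = class_prototypes(support_set)
near_a = classify([0.2, 0.1], protos)
near_b = classify([0.9, 1.0], protos)
```

Adapting to an unseen class or antenna configuration then only requires computing a new prototype from a handful of labelled samples, with no retraining of the classifier head.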