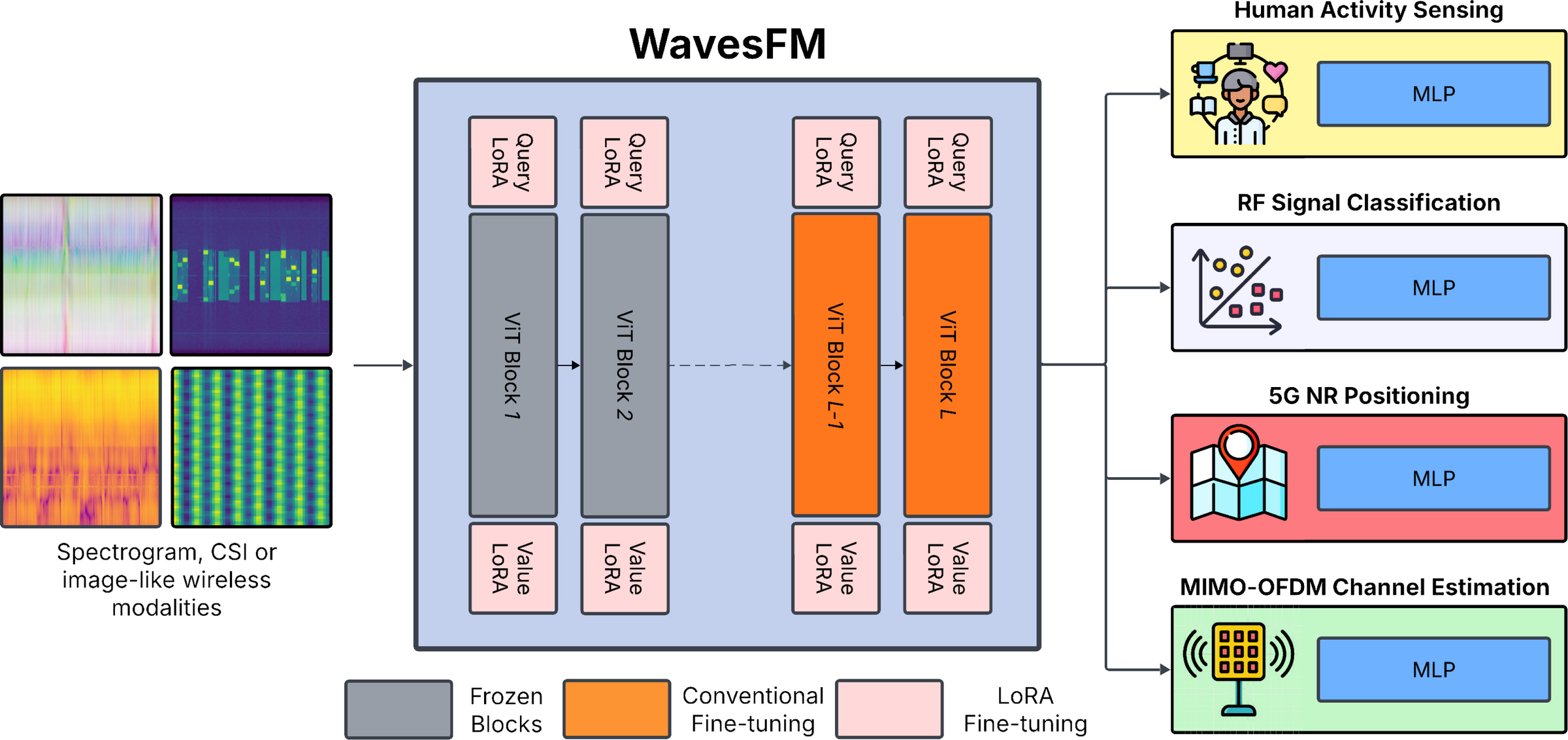

Key takeaway: Introduces WavesFM as a unified wireless foundation model that transfers across sensing, communication, and localization.

AI for Wireless

Pretraining-centric work on general wireless representations across modalities, tasks, and operating conditions.

Key takeaway: Shows that transformer architecture and pretraining choices strongly shape downstream transfer in radio foundation models.

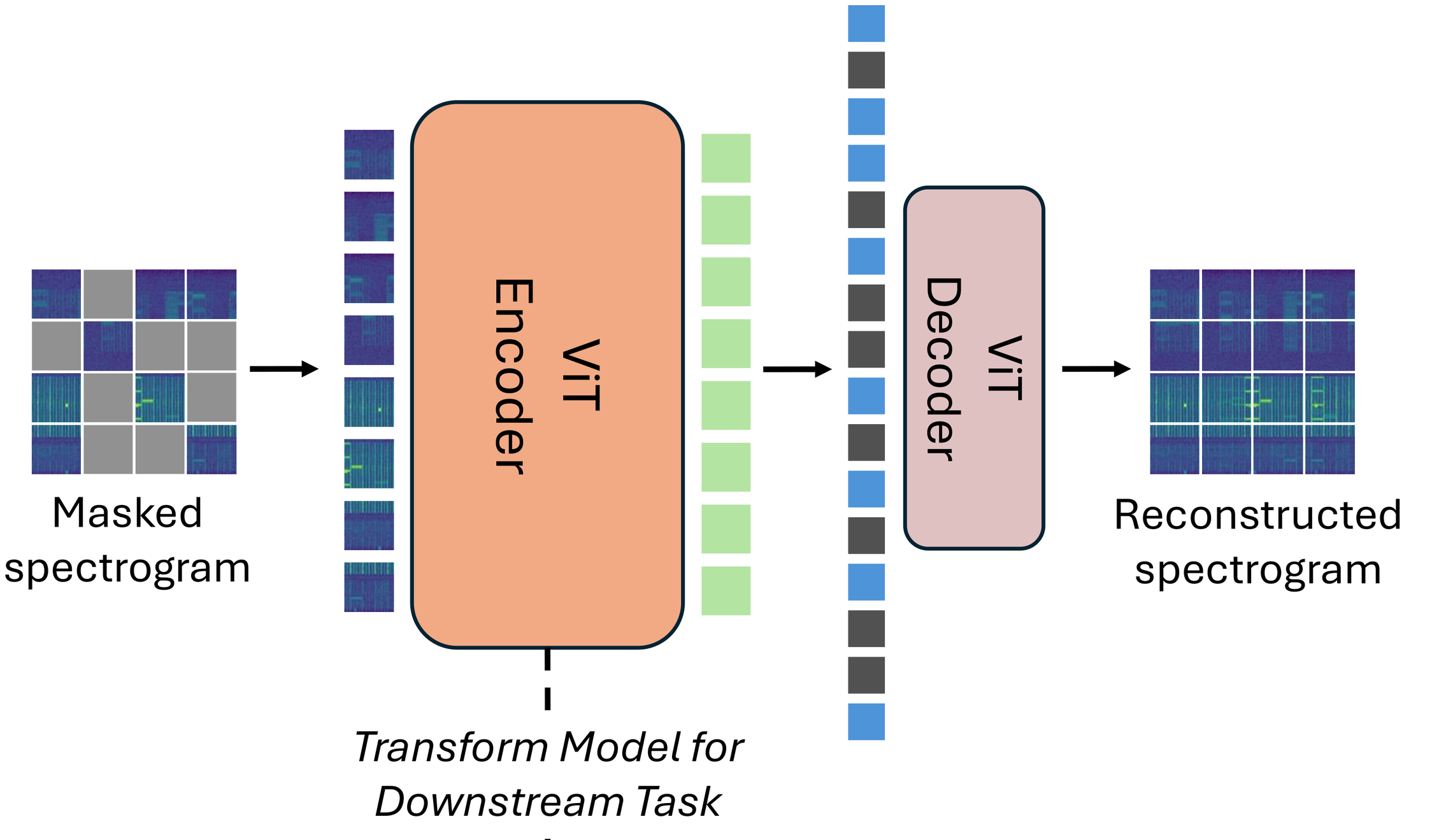

Key takeaway: Extends foundation modeling to raw I/Q streams with self-supervised pretraining and multitask fine-tuning.

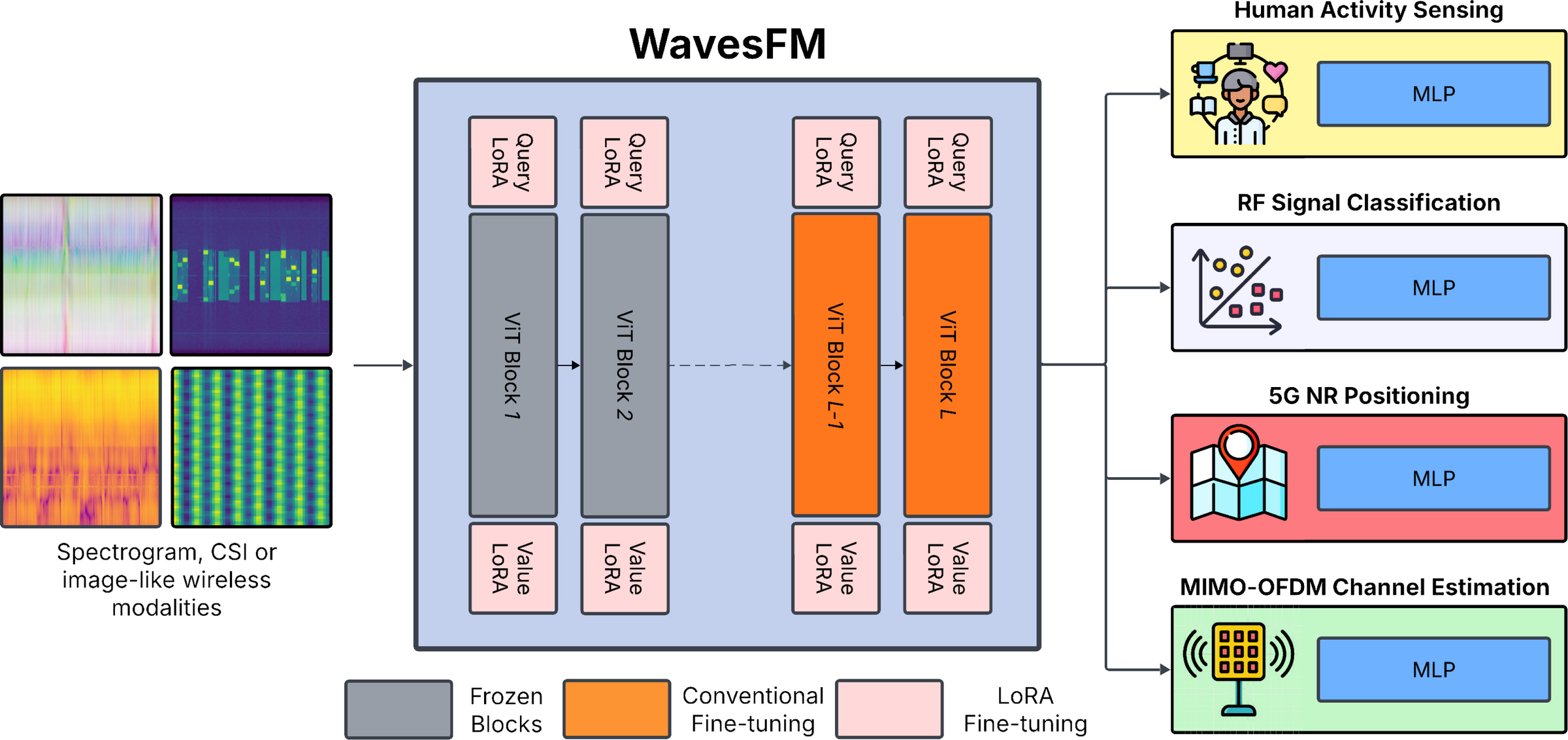

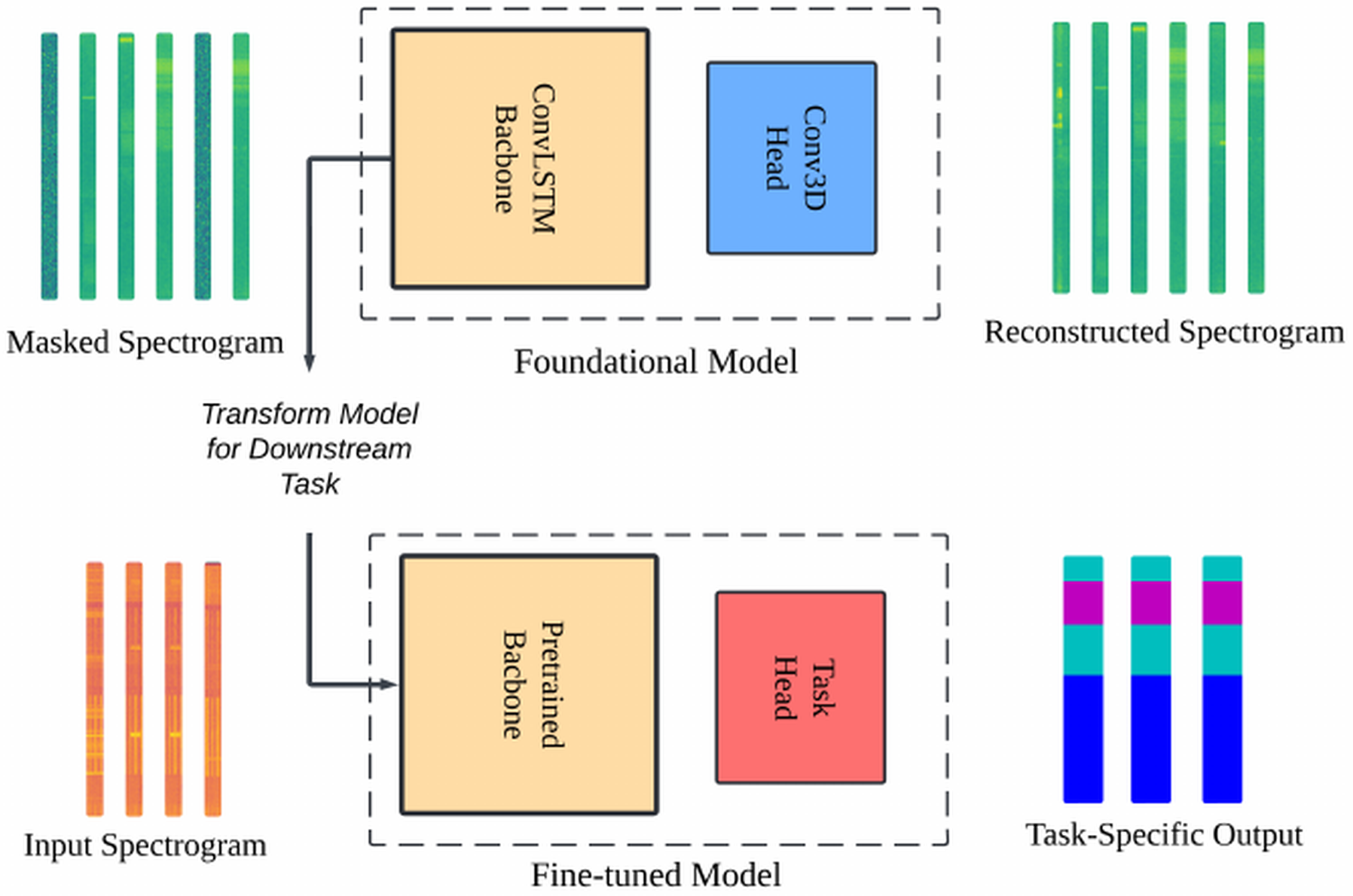

Key takeaway: Demonstrates that unlabeled spectrogram pretraining improves downstream performance and label efficiency.

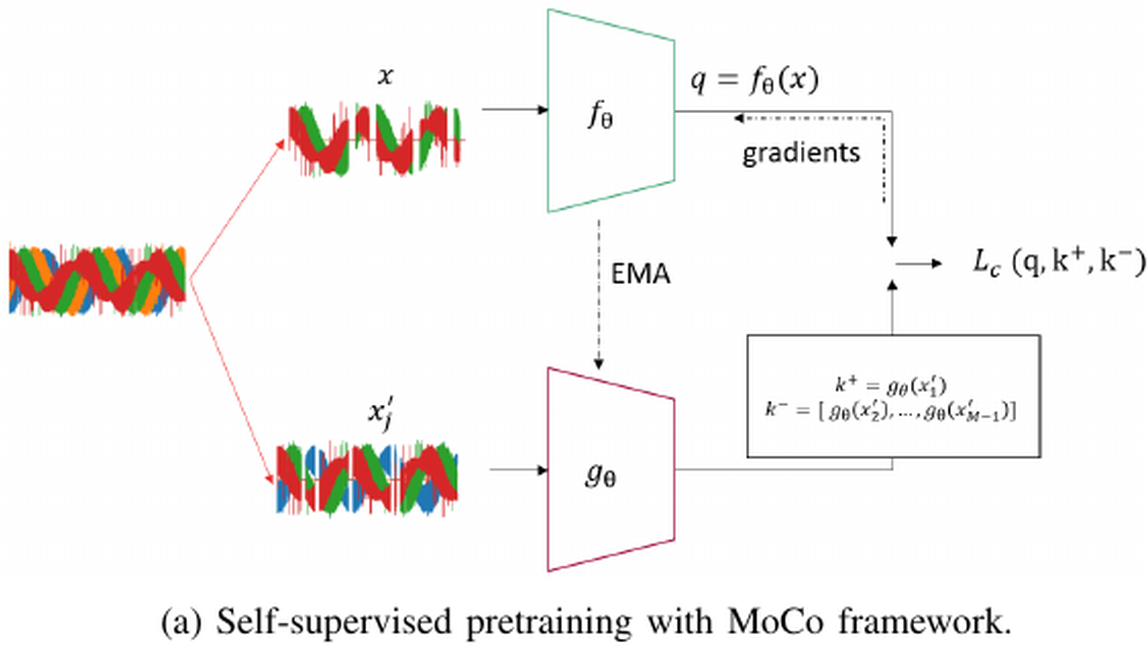

Key takeaway: Finds that one self-supervised encoder can support multiple wireless tasks through shared representations.

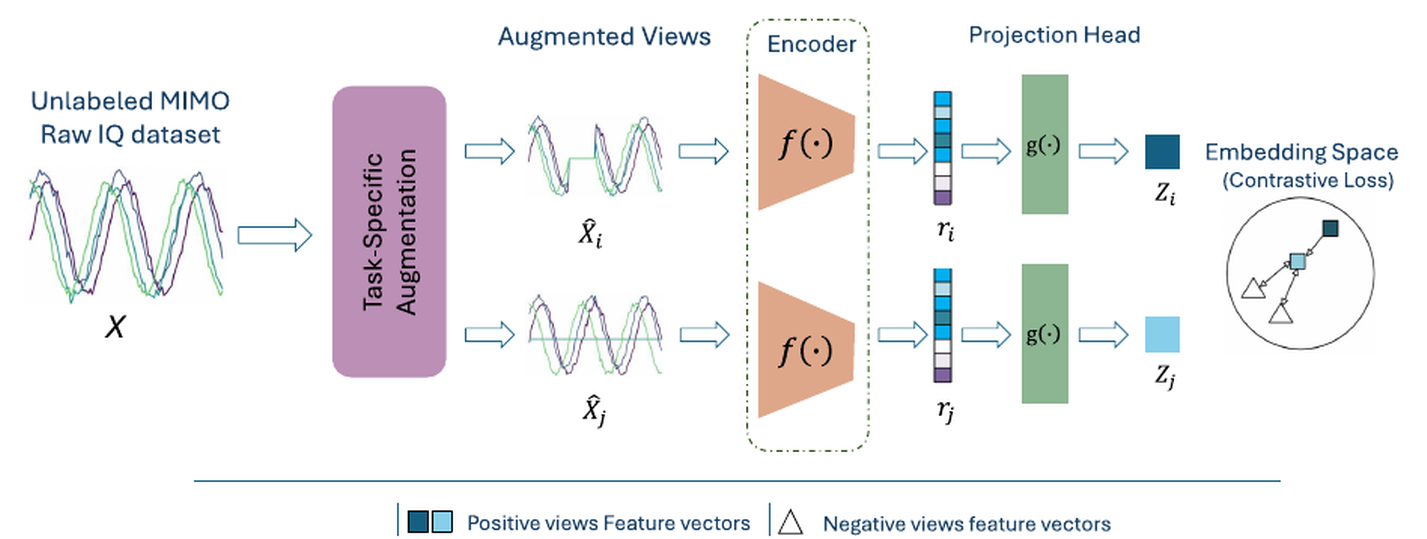

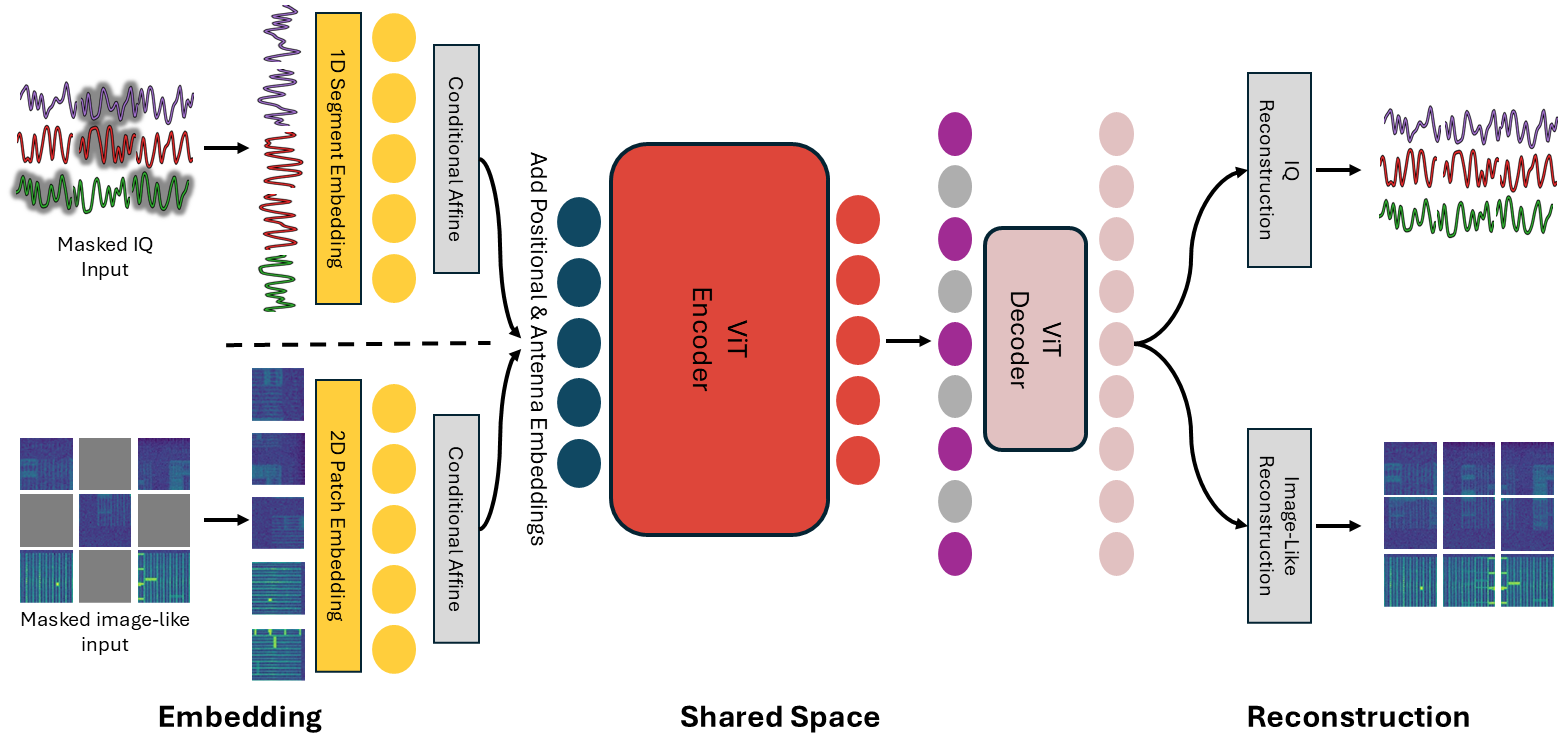

Key takeaway: Builds a multimodal wireless foundation model (WFM) across I/Q, spectrogram, CSI, and CIR inputs to improve robustness across tasks and conditions.

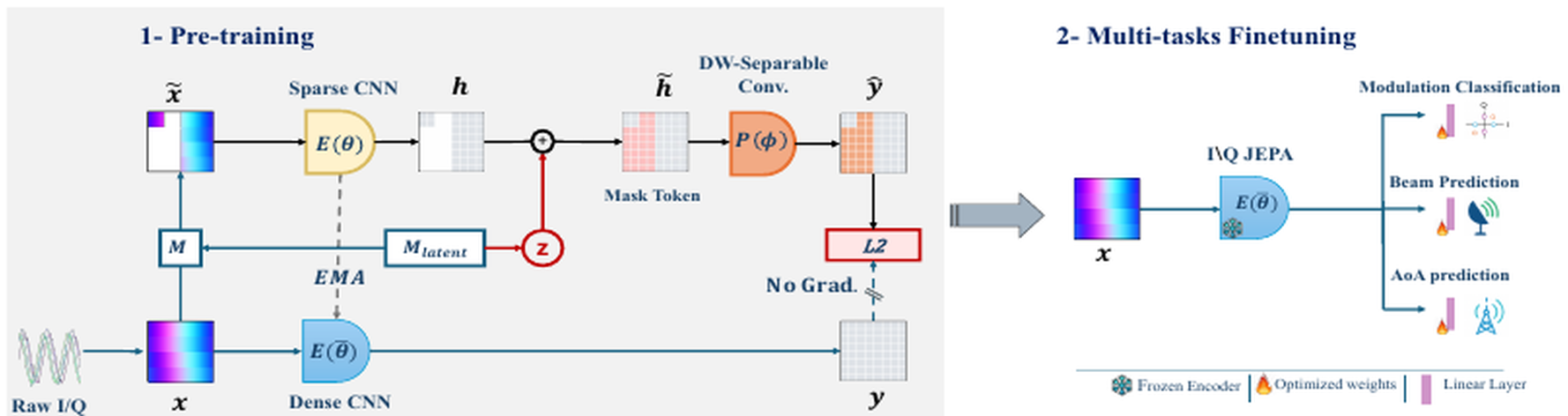

Key takeaway: Applies JEPA-style latent prediction to multi-antenna I/Q data and improves transfer on out-of-domain tasks.